Online shopping is convenient, but one major challenge remains: how can shoppers confidently see how clothes will look and fit without trying them on? Real-time virtual try-on systems aim to solve this problem by combining speed and accuracy. However, these systems face tough technical demands:

- Speed: To feel responsive, systems must process updates in under 100 milliseconds.

- Accuracy: Visuals must look realistic, with precise textures, sizes, and garment placement.

Shoppers expect smooth, lifelike experiences, and 98% of shoppers who have used AR features say the technology influenced their buying decisions. Lag or poor visuals, however, can erode trust and lead to abandoned purchases.

Companies like PixelPanda are addressing these challenges with AI tools that deliver fast, high-quality virtual try-ons. By optimizing model performance, managing latency, and maintaining visual precision, these tools help brands reduce returns and increase conversions. Plans start at $39/month (billed annually), making this technology accessible to businesses of all sizes.

The key takeaway? Success in virtual try-ons lies in finding the right balance between speed and accuracy to meet the high expectations of today’s shoppers.

What is Latency and How Does It Affect Virtual Try-Ons?

Defining Latency in Virtual Try-On Systems

Latency is the delay between a user’s action (such as changing a pose or selecting a garment) and the updated visual on screen. It depends mainly on how quickly the system can process each frame, which makes processing power the dominant factor. For virtual try-on systems to feel smooth and responsive, they typically need to reach around 10 frames per second (FPS), which leaves a budget of roughly 100 milliseconds per frame. High-performance systems minimize processing delays so that interactions like fabric stretching or body position adjustments appear without noticeable lag. Meeting this technical standard is key to a satisfying user experience.
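The link between frame rate and latency is simple arithmetic: a target FPS fixes how many milliseconds each frame may take, and every pipeline stage spends part of that budget. The sketch below makes this concrete. The stage names and timings are hypothetical, chosen only to illustrate how a pipeline that fits a 10 FPS budget can fall well short of 20 FPS.

```python
def frame_budget_ms(target_fps: float) -> float:
    """Per-frame time budget in milliseconds for a target frame rate."""
    if target_fps <= 0:
        raise ValueError("target_fps must be positive")
    return 1000.0 / target_fps


def remaining_budget_ms(target_fps: float, stage_ms: dict[str, float]) -> float:
    """Budget left after the stages run one after another.

    A negative result means the pipeline cannot sustain the target frame rate.
    """
    return frame_budget_ms(target_fps) - sum(stage_ms.values())


# Hypothetical per-frame stage timings (illustrative numbers only).
stages = {"capture": 8.0, "pose_estimation": 22.0, "garment_synthesis": 55.0, "render": 10.0}

print(frame_budget_ms(10))              # 100.0
print(frame_budget_ms(20))              # 50.0
print(remaining_budget_ms(10, stages))  # 5.0
print(remaining_budget_ms(20, stages))  # -45.0
```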

How Delays Impact User Experience

When latency increases, the user experience takes a hit. Slow loading times and laggy visuals can frustrate users, often prompting them to abandon the feature altogether. Consider this: 55% of online apparel shoppers have returned items because the product didn’t look as expected on them. High latency can also cause visual inconsistencies, such as jittering, which erodes user trust in the technology. On the flip side, 98% of shoppers who have used augmented reality (AR) features say the technology directly influenced their buying decisions. This highlights just how important a smooth, real-time experience is for turning curiosity into sales.

"A poorly executed virtual try-on could deter customers rather than facilitate a purchase. If using the tool becomes a hassle… customers might just skip it."

In September 2025, researchers Zaiqiang Wu, I-Chao Shen, and Takeo Igarashi from the University of Tokyo presented their "ReGarSyn" framework, which exploits temporal dependencies between frames to reach 10 FPS on a standard PC without visible jitter.

Reducing latency isn’t just about speed – it’s about maintaining the trust and engagement that make real-time virtual try-ons effective and enjoyable.

Why Accuracy Matters as Much as Speed

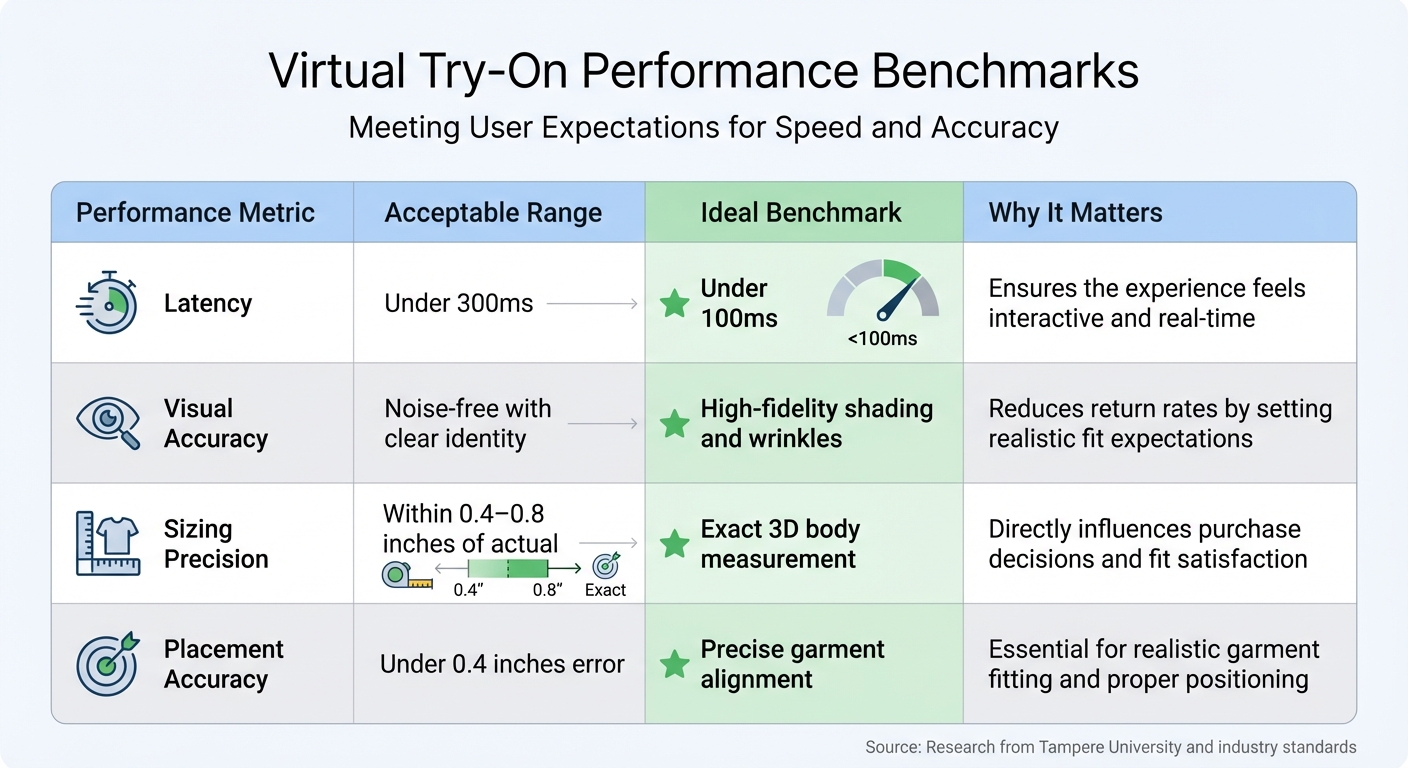

Virtual Try-On Performance Benchmarks: Latency, Accuracy, and Sizing Standards

The Speed vs. Accuracy Trade-Off

Pushing for faster processing often comes at the cost of quality. Systems that prioritize speed can produce visual errors such as distorted text, faded textures, and unwanted noise. Research led by Nannan Li describes the underlying difficulty: "Generating textures on the human that perfectly match that of the prompted garment is difficult, often resulting in distorted text and faded textures." In other words, a fast virtual try-on offers little value if the garments look unrealistic.

Accuracy isn’t just about placing a garment on a virtual body – it’s about doing it convincingly. This involves replicating natural bending, shading, and lighting. To win user trust, these systems must preserve non-clothing features, maintain texture precision down to the smallest details, and calculate exact measurements like hip and shoulder dimensions to predict fit. When these elements fall short, users quickly lose confidence in the results.

Studies back this up. In tests using error-aware refinement models to fix localized generation problems, users preferred the higher-accuracy versions 59% of the time. The reason is clear: issues with sizing and fit are the leading causes of high return rates in online fashion. If a virtual try-on tool can’t accurately depict how a garment will fit, it fails to solve one of the biggest pain points in online shopping.

Acceptable Latency and Accuracy Benchmarks

To tackle these challenges, researchers have established clear benchmarks for latency and accuracy. A study from Tampere University found that "there is a trade-off between naturalness, task accuracy and task completion time… participants prefer to use interaction techniques that provide a balance between these three factors". These benchmarks help guide system improvements by setting measurable goals. Key targets include keeping latency below 100ms, ensuring noise-free visuals, maintaining precise sizing, and limiting garment alignment errors to under 0.4 inches.

| Performance Metric | Acceptable Range | Ideal Benchmark | Why It Matters |
|---|---|---|---|
| Latency | Under 300 ms | Under 100 ms | Ensures the experience feels interactive and real-time |
| Visual Accuracy | Noise-free with clear identity | High-fidelity shading and wrinkles | Reduces return rates by setting realistic fit expectations |
| Sizing Precision | Within 0.4–0.8 inches of actual | Exact 3D body measurement | Directly influences purchase decisions and fit satisfaction |
| Placement Accuracy | Under 0.4 inches error | Precise garment alignment | Essential for realistic garment fitting and proper positioning |

The data makes it clear: latency under 100ms creates the feeling of "real-time" interaction, while maintaining visual accuracy is critical to avoid distortions that could erode trust. For sizing, being within 0.4–0.8 inches of actual body measurements is acceptable, but achieving exact 3D precision is the gold standard for commercial success. These benchmarks must work together – meeting one while falling short on others still leads to a disappointing user experience.
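Because the benchmarks only count when they are met together, they lend themselves to an automated check. The sketch below grades measured values against the table’s numeric thresholds. It assumes that "within 0.4–0.8 inches" means a sizing error of at most 0.8 inches is acceptable. Visual accuracy is left out because it has no numeric threshold in the table. The `Measurement` fields are illustrative names, not part of any particular tool.

```python
from dataclasses import dataclass


@dataclass
class Measurement:
    latency_ms: float          # end-to-end update latency
    placement_error_in: float  # garment alignment error, inches
    sizing_error_in: float     # |predicted - actual| body measurement, inches


def grade(m: Measurement) -> dict[str, str]:
    """Grade each metric as 'ideal', 'acceptable', or 'fail' per the benchmark table."""
    grades = {}
    if m.latency_ms < 100:
        grades["latency"] = "ideal"
    elif m.latency_ms < 300:
        grades["latency"] = "acceptable"
    else:
        grades["latency"] = "fail"
    # Placement: the table's acceptable bound is under 0.4 inches of error.
    grades["placement"] = "acceptable" if m.placement_error_in < 0.4 else "fail"
    # Sizing: assumed acceptable up to 0.8 inches; "exact" is the ideal.
    grades["sizing"] = "acceptable" if m.sizing_error_in <= 0.8 else "fail"
    return grades


def meets_all(m: Measurement) -> bool:
    """A system passes only if no metric fails."""
    return "fail" not in grade(m).values()


fast_but_misaligned = Measurement(latency_ms=80, placement_error_in=0.6, sizing_error_in=0.5)
print(grade(fast_but_misaligned))      # {'latency': 'ideal', 'placement': 'fail', 'sizing': 'acceptable'}
print(meets_all(fast_but_misaligned))  # False
```

The example shows the point made above: an ideal latency score does not rescue a system whose garment placement is off.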

Methods to Reduce Latency While Maintaining Accuracy

Model Optimization and Quantization

One effective way to reduce latency while preserving accuracy is to optimize AI models with quantization. Converting a model from a high-precision format such as 32-bit floating point (FP32) to a lower-precision format such as 8-bit integers (INT8), or even 4-bit (INT4), reduces memory use and speeds up processing, often with little loss in visual quality.
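To see where the memory savings come from, here is a minimal NumPy sketch of symmetric per-tensor INT8 quantization of a weight matrix. It is a simplified illustration: production toolchains such as TensorRT use their own calibration and often per-channel schemes. The random weights stand in for a real layer.

```python
import numpy as np


def quantize_int8(weights: np.ndarray) -> tuple[np.ndarray, float]:
    """Symmetric per-tensor quantization: map [-max|w|, max|w|] onto [-127, 127]."""
    scale = float(np.max(np.abs(weights))) / 127.0
    if scale == 0.0:
        scale = 1.0  # all-zero tensor: any scale reproduces it exactly
    q = np.clip(np.round(weights / scale), -127, 127).astype(np.int8)
    return q, scale


def dequantize(q: np.ndarray, scale: float) -> np.ndarray:
    """Recover approximate FP32 values from INT8 codes."""
    return q.astype(np.float32) * scale


rng = np.random.default_rng(0)
w = rng.normal(0.0, 0.05, size=(256, 256)).astype(np.float32)  # stand-in layer weights

q, scale = quantize_int8(w)
w_hat = dequantize(q, scale)

print(w.nbytes, q.nbytes)  # 262144 65536 (4x smaller)
# Rounding error per weight is at most half a quantization step.
print(float(np.max(np.abs(w - w_hat))) <= scale / 2 + 1e-6)  # True
```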

For example, TensorRT optimization has been shown to double throughput and cut latency in half in some deployments. Similarly, moving from FP16 to INT8 on modern GPUs with tensor cores can more than double inference speed with minimal impact on accuracy. This matters for virtual try-on systems, where garments must render quickly without losing fine details.
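Claims like "double throughput" are worth verifying on your own hardware. Below is a minimal timing harness, assuming your engine can be wrapped as a zero-argument callable. It reports median (p50) and tail (p95) latency plus sequential throughput. The `time.sleep` lambdas are hypothetical stand-ins for a baseline and an optimized engine.

```python
import statistics
import time
from typing import Callable, Dict


def benchmark(infer: Callable[[], object], warmup: int = 5, iters: int = 50) -> Dict[str, float]:
    """Time a zero-argument inference call; report p50/p95 latency and throughput."""
    for _ in range(warmup):  # untimed runs absorb one-time setup costs
        infer()
    samples_ms = []
    for _ in range(iters):
        start = time.perf_counter()
        infer()
        samples_ms.append((time.perf_counter() - start) * 1000.0)
    samples_ms.sort()
    p95_index = min(len(samples_ms) - 1, int(0.95 * len(samples_ms)))  # simple nearest-rank estimate
    return {
        "p50_ms": statistics.median(samples_ms),
        "p95_ms": samples_ms[p95_index],
        # Sequential throughput: requests completed per second of measured time.
        "throughput_per_s": iters / (sum(samples_ms) / 1000.0),
    }


# Stand-ins for a baseline and an optimized engine (hypothetical timings).
baseline = benchmark(lambda: time.sleep(0.004))
optimized = benchmark(lambda: time.sleep(0.002))
print(baseline["p50_ms"], optimized["p50_ms"])
print(optimized["throughput_per_s"] / baseline["throughput_per_s"])
```

Reporting p95 alongside the median matters for try-on: occasional slow frames show up as stutter even when average latency looks fine.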

Model size can also be reduced at the architecture level. The CatVTON framework, for example, removed unnecessary modules, significantly cutting its parameter count while maintaining high-quality outputs. Precision levels can also be tailored to individual components: using 8-bit quantization for the main backbone (such as the UNet) and 4-bit weight compression for auxiliary parts (such as VAE encoders) can shrink memory usage, often without noticeable quality loss.
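A quick back-of-the-envelope calculation shows what mixed precision buys. The sketch multiplies each component’s parameter count by its bit width. The parameter counts are hypothetical round numbers for illustration, not CatVTON’s actual sizes, and the estimate ignores activations and quantization-scale overhead.

```python
from typing import Dict

# Hypothetical component sizes for illustration only (not CatVTON's real counts).
COMPONENTS = {"unet_backbone": 800_000_000, "vae_encoder": 80_000_000}


def weight_memory_mb(bits_per_component: Dict[str, int]) -> float:
    """Approximate weight storage in MB (1 MB = 10**6 bytes) for per-component bit widths.

    Ignores activations, quantization scale tensors, and runtime overhead.
    """
    total_bits = sum(COMPONENTS[name] * bits for name, bits in bits_per_component.items())
    return total_bits / 8 / 1_000_000


print(weight_memory_mb({"unet_backbone": 32, "vae_encoder": 32}))  # 3520.0 (all FP32)
print(weight_memory_mb({"unet_backbone": 16, "vae_encoder": 16}))  # 1760.0 (all FP16)
print(weight_memory_mb({"unet_backbone": 8, "vae_encoder": 4}))    # 840.0 (INT8 + 4-bit)
```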

Static quantization, which computes scaling parameters ahead of time from calibration data, works especially well for image-based models. It is faster than dynamic quantization, which recalculates those parameters on every forward pass. Because modern GPUs support these low-precision formats, the savings carry through to faster inference. These optimizations lay the groundwork for further gains from batching and caching.
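The difference between the two approaches comes down to when the scale is computed. In this simplified NumPy sketch, the static quantizer fixes its scale once from calibration batches. The dynamic quantizer pays for an extra reduction over every input. The calibration data here is random and purely illustrative.

```python
import numpy as np


def scale_for(x: np.ndarray) -> float:
    """Symmetric INT8 scale that maps max |x| to 127."""
    m = float(np.max(np.abs(x)))
    return m / 127.0 if m > 0 else 1.0


def quantize(x: np.ndarray, scale: float) -> np.ndarray:
    return np.clip(np.round(x / scale), -127, 127).astype(np.int8)


class StaticQuantizer:
    """Scale fixed ahead of time from calibration data; no per-call statistics."""

    def __init__(self, calibration_batches: list[np.ndarray]):
        self.scale = max(scale_for(b) for b in calibration_batches)

    def __call__(self, x: np.ndarray) -> np.ndarray:
        return quantize(x, self.scale)


class DynamicQuantizer:
    """Scale recomputed from each input at run time (an extra pass over the data)."""

    def __call__(self, x: np.ndarray) -> np.ndarray:
        return quantize(x, scale_for(x))


rng = np.random.default_rng(0)
calibration = [rng.normal(0.0, 1.0, size=64) for _ in range(8)]
static_q = StaticQuantizer(calibration)
dynamic_q = DynamicQuantizer()

x = rng.normal(0.0, 1.0, size=64)
print(static_q(x)[:5], dynamic_q(x)[:5])
# Inputs far outside the calibrated range saturate under static quantization.
print(static_q(np.array([1000.0])))  # [127]
```

The trade-off is visible in the last line: static quantization is cheaper per call but depends on calibration data that resembles real inputs.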

Batching and Memory Management

Grouping multiple user requests into batches, instead of processing them one by one, can dramatically improve efficiency. Dynamic batching combines individual inference requests that arrive close together into larger batches, which GPUs process more effectively. This approach has been shown to significantly increase throughput with little or no increase in per-request latency.
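The core policy of a dynamic batcher can be expressed in a few lines. A batch is dispatched when it is full, or when waiting longer would exceed a maximum delay for its first request. The deterministic sketch below applies that rule to a list of timestamped requests. The `Request` type and parameter names are illustrative assumptions, not the configuration of any specific inference server.

```python
from dataclasses import dataclass


@dataclass
class Request:
    request_id: str
    arrival_ms: float


def form_batches(requests: list[Request], max_batch_size: int, max_wait_ms: float) -> list[list[str]]:
    """Group requests (by arrival time) into batches.

    A batch closes when it reaches max_batch_size, or when the next request
    arrives more than max_wait_ms after the batch's first request, so no
    request waits longer than max_wait_ms to be dispatched.
    """
    batches: list[list[str]] = []
    current: list[Request] = []
    for req in sorted(requests, key=lambda r: r.arrival_ms):
        if current and (
            len(current) == max_batch_size
            or req.arrival_ms - current[0].arrival_ms > max_wait_ms
        ):
            batches.append([r.request_id for r in current])
            current = []
        current.append(req)
    if current:
        batches.append([r.request_id for r in current])
    return batches


reqs = [Request("a", 0), Request("b", 2), Request("c", 3),
        Request("d", 4), Request("e", 20), Request("f", 21)]
print(form_batches(reqs, max_batch_size=3, max_wait_ms=5.0))  # [['a', 'b', 'c'], ['d'], ['e', 'f']]
```

The `max_wait_ms` bound is what keeps the added latency small: it caps how long any request sits in the queue in exchange for larger, more efficient batches.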

Another strategy is deploying multiple instances of a model. By overlapping memory transfer operations with active inference computations, performance can be further enhanced. For example, in an NVIDIA test, increasing the GPU instance count to four for a DenseNet ONNX model boosted throughput to 323.13 inferences per second – a 92% improvement over the default setup.
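Why does overlapping help? In an idealized two-stage pipeline, a single instance pays transfer time plus compute time for each request. With overlap, throughput is bounded only by the slower stage. The sketch below models this with hypothetical stage times. It is a simplification, not NVIDIA’s benchmark methodology. The last line simply back-computes the default throughput implied by the 92% figure above.

```python
def throughput_per_s(transfer_ms: float, compute_ms: float, overlapped: bool) -> float:
    """Idealized steady-state throughput for a transfer -> compute pipeline.

    Without overlap each request pays transfer + compute; with overlap
    (e.g. one model instance copying data while another computes), the
    slower stage sets the pace.
    """
    per_request_ms = max(transfer_ms, compute_ms) if overlapped else transfer_ms + compute_ms
    return 1000.0 / per_request_ms


# Hypothetical stage times in milliseconds.
print(throughput_per_s(2.0, 3.0, overlapped=False))  # 200.0
print(throughput_per_s(2.0, 3.0, overlapped=True))   # ~333.3

# 323.13 inferences/s as a 92% improvement implies a default of about:
print(round(323.13 / 1.92, 1))  # 168.3
```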

For video-based virtual try-ons, recurrent synthesis frameworks help maintain consistency across frames while keeping memory usage steady. These frameworks can achieve around 10 frames per second on a standard PC, ensuring smooth and coherent virtual try-on experiences. Alongside model adjustments, efficient request grouping and advanced decoding techniques further enhance system performance.
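The reason recurrent processing keeps memory steady is that each frame is produced from the current input plus a fixed-size state carried from the previous frame, never from the whole history. The sketch below shows only that recurrence pattern. It uses simple exponential smoothing as a hypothetical stand-in for a learned synthesis model, and it is not the ReGarSyn method.

```python
from typing import Iterable, Iterator

import numpy as np


def recurrent_stream(frames: Iterable[np.ndarray], alpha: float = 0.6) -> Iterator[np.ndarray]:
    """Process frames one at a time, carrying only the previous output as state.

    `alpha` weights the current frame and (1 - alpha) the previous output.
    Memory stays constant regardless of video length because only one frame
    of state is kept.
    """
    prev = None
    for frame in frames:
        out = frame if prev is None else alpha * frame + (1.0 - alpha) * prev
        prev = out
        yield out


# A jittery signal (e.g. a garment offset flickering between two values).
frames = [np.full(2, float(i % 2)) for i in range(10)]
outs = list(recurrent_stream(frames))

in_jitter = max(float(np.max(np.abs(frames[i + 1] - frames[i]))) for i in range(9))
out_jitter = max(float(np.max(np.abs(outs[i + 1] - outs[i]))) for i in range(9))
print(in_jitter, out_jitter)  # 1.0 and a smaller value
```

The design choice here is a trade-off: stronger smoothing (lower `alpha`) suppresses jitter but makes the output lag behind fast movements.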

Speculative Decoding and Caching

Speculative decoding is another method that improves efficiency. It uses a smaller, faster "draft" model to generate initial predictions, which are then refined by a larger, more accurate "target" model. This approach reduces latency and increases throughput by shifting some of the workload to a lightweight model.
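Speculative decoding comes from autoregressive token generation, so the sketch below works on integer tokens. It implements the greedy variant: the draft proposes `k` tokens, and the target keeps the longest agreeing prefix plus its own token at the first disagreement. The output is therefore identical to decoding with the target alone. The toy `target` and `draft` functions are hypothetical stand-ins for real models. The per-token target calls here would be a single batched pass in practice.

```python
from typing import Callable, List, Tuple

NextToken = Callable[[List[int]], int]  # greedy "model": context -> next token id


def greedy_decode(target: NextToken, prompt: List[int], max_new: int) -> List[int]:
    """Reference: one target pass per generated token."""
    out = list(prompt)
    for _ in range(max_new):
        out.append(target(out))
    return out


def speculative_decode(target: NextToken, draft: NextToken, prompt: List[int],
                       max_new: int, k: int = 4) -> Tuple[List[int], int]:
    """Greedy speculative decoding; returns (tokens, number of verification rounds).

    A real system scores all k drafted positions in ONE batched target pass,
    so each round costs roughly one target call instead of k.
    """
    out = list(prompt)
    rounds = 0
    while len(out) - len(prompt) < max_new:
        rounds += 1
        proposal: List[int] = []
        for _ in range(k):
            proposal.append(draft(out + proposal))
        for tok in proposal:
            expected = target(out)
            out.append(expected)  # the target's choice is always what gets kept
            if expected != tok or len(out) - len(prompt) >= max_new:
                break  # reject the rest of the draft (or stop at the length limit)
    return out, rounds


def target(ctx: List[int]) -> int:
    return (ctx[-1] + 1) % 10


def draft(ctx: List[int]) -> int:
    # Cheap approximation that guesses wrong after a 6.
    return 0 if ctx[-1] == 6 else (ctx[-1] + 1) % 10


tokens, rounds = speculative_decode(target, draft, [0], max_new=8)
print(tokens)  # [0, 1, 2, 3, 4, 5, 6, 7, 8]
print(rounds)  # 3, versus 8 target passes for plain greedy decoding
```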

Techniques such as Batched Attention-optimized Speculative Sampling (BASS) have demonstrated more than a 2× improvement in token generation speed while making better use of GPU resources.

Caching also plays a crucial role in reducing computation time. Prefix caching, for instance, reuses previously computed data – like the KV cache – for shared patterns or instructions across similar requests. In virtual try-on systems, if multiple users rely on the same prompts or garment reference patterns, this method can save both time and memory.
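Prefix caching in language models reuses the KV cache. For try-on, the analogous idea is to encode a shared garment reference once and reuse the result. The sketch below is a small LRU cache keyed by a content hash, so identical reference images from different users share one entry. `fake_encode` is a hypothetical stand-in for a costly encoder pass. The class itself is illustrative and does not implement a KV cache.

```python
import hashlib
from collections import OrderedDict
from typing import Callable, List


class ReferenceCache:
    """LRU cache for expensive encodings of shared inputs (e.g. a garment reference image)."""

    def __init__(self, encode: Callable[[bytes], List[float]], capacity: int = 128):
        self._encode = encode
        self._capacity = capacity
        self._store: "OrderedDict[str, List[float]]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, data: bytes) -> List[float]:
        key = hashlib.sha256(data).hexdigest()  # same bytes -> same key, across users
        if key in self._store:
            self.hits += 1
            self._store.move_to_end(key)  # mark as most recently used
            return self._store[key]
        self.misses += 1
        value = self._encode(data)
        self._store[key] = value
        if len(self._store) > self._capacity:
            self._store.popitem(last=False)  # evict the least recently used entry
        return value


calls = []


def fake_encode(data: bytes) -> List[float]:
    # Hypothetical stand-in for a costly garment-encoder forward pass.
    calls.append(data)
    return [float(len(data))]


cache = ReferenceCache(fake_encode, capacity=2)
for _ in range(3):  # three users request the same garment reference
    cache.get(b"red-dress-front.png")
print(cache.hits, cache.misses, len(calls))  # 2 1 1
```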

Additionally, model warmup reduces cold-start latency by running sample inferences before real traffic arrives, and memory-efficient techniques such as PagedAttention improve throughput by managing the cache in fixed-size blocks rather than large contiguous allocations. Together, these techniques help virtual try-on systems deliver faster results without changing the visual fidelity of the model’s output.
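PagedAttention, introduced with the vLLM serving system, splits the KV cache into fixed-size blocks and gives each sequence a table of block ids. Memory is then reserved only as tokens are produced, and it is returned in whole blocks when a sequence finishes. The toy sketch below shows only that bookkeeping. It contains no attention kernel, and the class and method names are hypothetical.

```python
class BlockAllocator:
    """Toy paged allocator in the spirit of PagedAttention's KV-cache blocks."""

    def __init__(self, num_blocks: int, block_size: int):
        self.block_size = block_size
        self.free: list[int] = list(range(num_blocks))
        self.tables: dict[str, list[int]] = {}  # sequence id -> its block ids
        self.lengths: dict[str, int] = {}       # sequence id -> tokens stored

    def append_token(self, seq_id: str) -> None:
        """Record one more token, grabbing a new block only when the last one is full."""
        length = self.lengths.get(seq_id, 0)
        table = self.tables.setdefault(seq_id, [])
        if length % self.block_size == 0:  # no block yet, or current block full
            if not self.free:
                raise MemoryError("out of cache blocks")
            table.append(self.free.pop())
        self.lengths[seq_id] = length + 1

    def release(self, seq_id: str) -> None:
        """Return all of a finished sequence's blocks to the free pool."""
        self.free.extend(self.tables.pop(seq_id, []))
        self.lengths.pop(seq_id, None)


alloc = BlockAllocator(num_blocks=8, block_size=16)
for _ in range(20):
    alloc.append_token("a")  # 20 tokens -> 2 blocks
for _ in range(5):
    alloc.append_token("b")  # 5 tokens -> 1 block
print(len(alloc.tables["a"]), len(alloc.tables["b"]), len(alloc.free))  # 2 1 5
alloc.release("a")
print(len(alloc.free))  # 7
```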


How PixelPanda Balances Speed and Accuracy


AI Fashion Studio Features

PixelPanda’s AI Fashion Studio simplifies the challenge of balancing speed and precision by combining background removal, [upscaling](https://pixelpanda.ai/free-tools/enhance-photo), and generative AI into a single, streamlined workflow. Instead of juggling multiple tools, this platform uses a cloud-based engine to handle everything in one go. This allows e-commerce brands to generate thousands of high-quality product images quickly, without compromising on visual consistency.

The platform’s background removal model excels at handling intricate details, ensuring precise outfit swaps. Its 4x upscaling feature delivers high-resolution images perfect for product pages and marketing materials. Developers can easily integrate this functionality through the REST API, using SDKs for TypeScript or Python, to automate batch processing.
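Batch automation usually follows the same client-side pattern regardless of the service: run a per-image job over a catalog with bounded concurrency, and record failures instead of aborting. The sketch below shows that pattern only. It does not use PixelPanda’s actual SDK or REST endpoints. `process_image` is a hypothetical placeholder for whatever call your integration makes.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def process_catalog(paths: List[str], process_image: Callable[[str], str],
                    max_workers: int = 4) -> Dict[str, str]:
    """Run a per-image job over a catalog with at most max_workers in flight.

    Errors are captured per image so one bad file does not stop the batch.
    """
    def safe(path: str) -> str:
        try:
            return process_image(path)
        except Exception as exc:
            return f"error: {exc}"

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves input order, so results line up with paths.
        return dict(zip(paths, pool.map(safe, paths)))


def fake_process(path: str) -> str:
    # Hypothetical stand-in for an SDK or REST call.
    if path.startswith("bad"):
        raise ValueError("unreadable image")
    return f"processed:{path}"


results = process_catalog(["dress.jpg", "bad.jpg", "shirt.jpg"], fake_process)
print(results["dress.jpg"], results["bad.jpg"])
```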

The AI Avatar Studio takes things a step further by creating diverse virtual models, reducing the need for time-consuming and costly physical photoshoots. This feature also supports outfit swapping, enabling brands to showcase garments on different virtual models instantly. To make it even easier for businesses to explore these tools, PixelPanda offers 10 free credits – no credit card required – so you can try out the virtual try-on features before committing to a paid plan.

All of these tools are available through flexible credit-based plans, designed to grow alongside your business.

Pricing Plans for Small Businesses

PixelPanda operates on a credit-based pricing model. Here’s a breakdown of the plans:

- Starter Plan: $39/month (billed annually) for 7,000 credits

- Growth Plan: $59/month for 15,000 credits

- Pro Plan: $89/month for 35,000 credits

Each plan includes commercial usage rights and supports both image and video outputs. However, unused credits don’t carry over to the next month, so it’s important to select a plan that matches your monthly production needs.
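Since credits don’t roll over, choosing a plan comes down to covering one month’s usage at the lowest price. The sketch below encodes the three plans as listed above. It treats the Starter price as the annual-billing rate and assumes you already know your monthly credit needs, because the credit cost per image or video is not stated here.

```python
from typing import Optional

# Monthly price (USD) and monthly credits, as listed above.
# The Starter price assumes annual billing.
PLANS = {
    "Starter": (39, 7_000),
    "Growth": (59, 15_000),
    "Pro": (89, 35_000),
}


def cheapest_plan(monthly_credits_needed: int) -> Optional[str]:
    """Cheapest single plan that covers a month's usage (unused credits don't roll over)."""
    fitting = [(price, name) for name, (price, credits) in PLANS.items()
               if credits >= monthly_credits_needed]
    return min(fitting)[1] if fitting else None


for name, (price, credits) in PLANS.items():
    print(name, round(price / credits * 1000, 2))  # USD per 1,000 credits
print(cheapest_plan(6_000), cheapest_plan(12_000), cheapest_plan(40_000))  # Starter Growth None
```

Larger plans cost less per credit, but because unused credits expire, the cheapest plan that covers your actual monthly volume is usually the better choice.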

PixelPanda’s seamless integration of advanced technology and an intuitive user experience demonstrates how businesses can achieve efficiency without cutting corners.

Conclusion

Finding the right balance between speed and accuracy in virtual try-on technology is crucial for both technical performance and business success. Studies show that implementing this technology can boost conversion rates by up to 94%, while accurate try-ons significantly cut down on return rates. The goal? Create an experience that feels instantaneous, running at no less than about 10 FPS and ideally at 20 FPS or higher, while delivering lifelike visuals that give shoppers the confidence to hit "buy."

Achieving this balance requires smart optimization strategies. Prioritizing speed too much can result in jittery, unconvincing visuals, while focusing solely on accuracy can slow performance to a crawl. The most effective solutions blend techniques like model optimization, efficient memory use, and strategic caching to deliver both speed and realism.

PixelPanda’s AI Fashion Studio addresses these challenges head-on. With features like integrated background removal, upscaling, and generative AI, it offers a seamless experience. Plans start at $39/month (billed annually) for 7,000 credits, making high-quality AR tools accessible to businesses of all sizes.

Consumer demand is driving this innovation. A striking 72% of luxury fashion shoppers now consider AR an important part of their shopping journey. To meet these expectations, businesses need technology that doesn’t force a trade-off between speed and accuracy. By delivering fast, reliable, and visually convincing virtual try-ons, companies can reduce cart abandonment, build trust, and ultimately drive more sales – all while meeting the high standards of today’s shoppers.

FAQs

How do virtual try-ons enhance online shopping?

Virtual try-ons are changing the way we shop online by letting customers see how clothes or accessories will look and fit on their bodies or lifelike avatars. This adds a personal and interactive touch to the shopping experience, making it easier for people to feel confident about their choices while easing worries about size, fit, or style.

These tools rely on advanced AI to create realistic visuals, capturing details like fabric textures, patterns, and how the clothing fits. By offering accurate, real-time previews, virtual try-ons help bridge the gap between the convenience of online shopping and the tactile experience of in-store browsing. The result? Shoppers enjoy a smoother experience, and retailers benefit from happier customers and fewer returns. It’s a win for everyone.

What are the key challenges in creating real-time virtual try-on systems?

Real-time virtual try-on systems come with a set of challenges that influence their speed, precision, and overall usability. One of the biggest obstacles is ensuring accurate performance across a wide range of variables, including different lighting conditions, skin tones, and body shapes. These factors can significantly impact the effectiveness of AR and computer vision algorithms. Replicating how garments fit realistically – especially loose or intricate clothing – adds another layer of complexity. This requires advanced 3D modeling to prevent problems like misaligned visuals or awkward distortions.

Another pressing issue is performance efficiency. These systems need to provide smooth, real-time interactions while maintaining high-quality visuals, which calls for cutting-edge algorithms and significant computational resources. On top of that, privacy concerns about facial data demand robust security measures, such as processing data locally, to safeguard user information. Striking the right balance between speed, realism, and data security remains a challenging yet essential aspect of advancing this technology.

How does PixelPanda deliver fast and accurate virtual try-ons?

PixelPanda uses cutting-edge AI technology and fine-tuned processing to offer real-time virtual try-ons that are quick and impressively precise. It carefully maintains garment details, natural draping, and realistic lighting, all while adjusting effortlessly to various poses and body shapes.

This combination of speed and precision ensures users enjoy a seamless, visually accurate try-on experience without interruptions – making it an excellent tool for presenting products in a polished and captivating manner.